An Evaluation of Dichoptic Tonemapping in Virtual Reality Experiences

Martin Mišiak, Tom Müller, Arnulph Fuhrmann and Marc Erich Latoschik

Virtuelle und Erweiterte Realität – 20. Workshop der GI-Fachgruppe VR/AR, 2023, Köln, Germany

Martin Mišiak, Arnulph Fuhrmann, Marc Erich Latoschik

ACM Symposium on Applied Perception 2023 (SAP ’23)

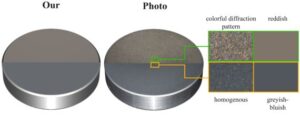

Olaf Clausen, Yang Chen, Arnulph Fuhrmann and Ricardo Marroquim

Computer Graphics Forum, 42: 245-260.

Martin Mišiak, Arnulph Fuhrmann, Marc Erich Latoschik

Proceedings of the 27th ACM Symposium on Virtual Reality Software and Technology, 2021

Nigel Frangenberg, Kristoffer Waldow and Arnulph Fuhrmann

Proceedings of the 28th IEEE Virtual Reality Conference (VR ’21), Lisbon, Portugal

Ursula Derichs, Martin Mišiak and Arnulph Fuhrmann

Virtuelle und Erweiterte Realität – 17. Workshop der GI-Fachgruppe VR/AR, 2020, Trier, Germany

Sven Hinze, Martin Mišiak and Arnulph Fuhrmann

Virtuelle und Erweiterte Realität – 17. Workshop der GI-Fachgruppe VR/AR, 2020, Trier, Germany

This paper presents a remote rendering system for a Unity-based AR app. The system runs on an edge server located within the mobile network operator's network.

We propose an easy-to-integrate automatic speech recognition (ASR) and text visualization extension for an avatar-based MR remote collaboration system; the extension visualizes speech as spatially floating speech bubbles. In a small pilot study, our extension achieved a word accuracy of 97%, measured using the widely used word error rate (WER).
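The word error rate counts the substitutions (S), deletions (D) and insertions (I) needed to turn the recognized text into the reference transcript, divided by the number of reference words N, i.e. WER = (S + D + I) / N. The Python sketch below computes it with a word-level edit distance. It assumes whitespace tokenization and that word accuracy is reported as 1 - WER, a common convention; the abstract does not state the exact formula, and the function names are illustrative only.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (S + D + I) / N via word-level Levenshtein distance."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # dp[i][j] = minimum number of edits turning ref[:i] into hyp[:j]
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dp[0][j] = j  # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(
                dp[i - 1][j] + 1,         # deletion
                dp[i][j - 1] + 1,         # insertion
                dp[i - 1][j - 1] + cost,  # substitution (or match if cost == 0)
            )
    return dp[len(ref)][len(hyp)] / len(ref)


def word_accuracy(reference: str, hypothesis: str) -> float:
    """Assumed convention: word accuracy = 1 - WER."""
    return 1.0 - word_error_rate(reference, hypothesis)
```

For example, recognizing "please move the red cube to the right side now" when the speaker said "... to the left side now" is one substitution in ten reference words, giving a WER of 0.1 and a word accuracy of 0.9.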

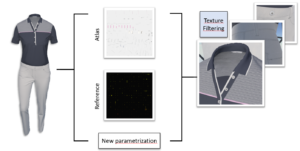

This paper investigates how normal mapping affects the perception of geometric depth in stereoscopic versus non-stereoscopic views.

Kristoffer Waldow, Arnulph Fuhrmann and Stefan M. Grünvogel

In: Proceedings…

We investigate the influence of four different audio representations on visually induced self-motion (vection). Our study tested the hypothesis that audio sources can increase the feeling of visually induced vection while reducing negative effects such as visually induced motion sickness.

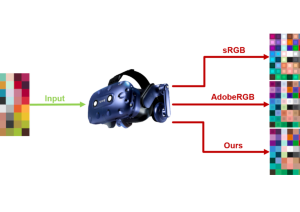

When rendering images in real time, shading pixels is a comparatively expensive operation, especially for head-mounted displays, where a separate image is rendered for each eye and high frame rates must be achieved. Upscaling algorithms are one way of reducing pixel shading costs. We implement four basic upscaling algorithms in a VR rendering system and conduct a user study on subjective image quality. We find that users preferred methods with better contrast preservation.
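Rendering at reduced resolution and upscaling saves shading work roughly in proportion to the pixel count: rendering at half resolution on both axes shades about a quarter of the pixels. The abstract does not name the four algorithms that were compared, so the Python sketch below shows only one common basic method, bilinear interpolation, on a grayscale image stored as a list of rows. It is an illustration under that assumption, not the paper's implementation; the function names are hypothetical.

```python
from typing import List

Image = List[List[float]]  # grayscale image, indexed as image[y][x]


def upscale_bilinear(src: Image, out_w: int, out_h: int) -> Image:
    """Upscale a grayscale image with bilinear interpolation (pixel centers aligned)."""
    in_h, in_w = len(src), len(src[0])
    out: Image = []
    for oy in range(out_h):
        # Map the output pixel center to input coordinates, clamped to the valid range.
        sy = min(max((oy + 0.5) * in_h / out_h - 0.5, 0.0), in_h - 1)
        y0 = int(sy)
        y1 = min(y0 + 1, in_h - 1)
        fy = sy - y0
        row = []
        for ox in range(out_w):
            sx = min(max((ox + 0.5) * in_w / out_w - 0.5, 0.0), in_w - 1)
            x0 = int(sx)
            x1 = min(x0 + 1, in_w - 1)
            fx = sx - x0
            # Interpolate horizontally on the two neighboring rows, then vertically.
            top = src[y0][x0] * (1 - fx) + src[y0][x1] * fx
            bottom = src[y1][x0] * (1 - fx) + src[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


def shaded_pixel_ratio(scale: float) -> float:
    """Fraction of pixels shaded when rendering at `scale` times the resolution per axis."""
    return scale * scale
```

Bilinear filtering blends neighboring pixels and therefore tends to soften edges; methods that better preserve local contrast avoid some of that blur, which is the property the study found users preferred.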

In this paper, we present a Mixed Reality telepresence system that connects multiple AR or VR devices in a shared virtual environment using the simple MQTT networking protocol. MQTT follows a publish-subscribe pattern, which allows reliable and easy platform-independent integration, so that different clients can be implemented to handle communication and enable remote collaboration. To support embodied, natural human interaction, the system maps the human interaction channels (gestures, gaze and speech) onto an abstract, stylized avatar using an upper-body inverse kinematics approach. This setup allows spatially separated people to interact with each other through avatar-mediated communication.
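In a publish-subscribe setup, clients never address each other directly: each device publishes updates (for example avatar poses or speech) to named topics, and the broker forwards every message to all clients whose subscription filter matches. The in-memory Python sketch below illustrates only that routing, including MQTT's `+` (single-level) and `#` (multi-level) topic wildcards in simplified form. It is not an MQTT implementation, and the `Broker` class and topic names are hypothetical, not taken from the paper's system.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Callback = Callable[[str, bytes], None]


def topic_matches(topic_filter: str, topic: str) -> bool:
    """Match a topic against an MQTT-style filter using '+' and '#' wildcards."""
    f_parts = topic_filter.split("/")
    t_parts = topic.split("/")
    for i, f in enumerate(f_parts):
        if f == "#":
            return True  # '#' matches the remaining levels, including the parent level
        if i >= len(t_parts):
            return False  # filter is longer than the topic
        if f != "+" and f != t_parts[i]:
            return False  # literal level differs ('+' matches any single level)
    return len(f_parts) == len(t_parts)


class Broker:
    """Hypothetical in-memory broker that routes published messages to subscribers."""

    def __init__(self) -> None:
        self._subs: Dict[str, List[Callback]] = defaultdict(list)

    def subscribe(self, topic_filter: str, callback: Callback) -> None:
        self._subs[topic_filter].append(callback)

    def publish(self, topic: str, payload: bytes) -> None:
        # Deliver to every subscription whose filter matches the topic.
        for topic_filter, callbacks in self._subs.items():
            if topic_matches(topic_filter, topic):
                for cb in callbacks:
                    cb(topic, payload)
```

A client rendering remote avatars could, for instance, subscribe to `session/avatar/+/pose` and then receive pose updates from every participant without knowing how many devices are connected or on which platform they run.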